Generating a technical synopsis can often feel like trying to condense a thousand-page manual into a single, punchy paragraph without losing the vital “why” and “how.” If you’ve ever asked an AI to summarize a complex white paper only to receive a generic, fluffy response, you know the struggle. This is where Few-Shot Learning becomes your secret weapon.

By providing a few high-quality examples within your prompt, you guide the model’s logic and formatting. Think of it as showing a new chef three perfectly plated dishes before asking them to cook; it’s much more effective than just handing them a recipe.

Understanding Few-Shot Learning in a Technical Context

At its core, Few-Shot Learning is a technique where we give a Large Language Model (LLM) a small set of worked examples, usually two to five, directly in the prompt. No retraining takes place: the model’s weights stay fixed, and it picks up the tone, length, and technical depth you require by matching the pattern in those examples.

Why Zero-Shot Often Fails

When you use “Zero-Shot” (asking for a task with no examples), the AI relies only on its broad training data. This often produces a tone mismatch, where the output sounds too corporate or overly simplistic. For technical synopses, you need precision. You need the AI to understand that “latency” is more important than “user-friendly interface” in a backend systems report.

The Role of In-Context Examples

In-context examples serve as a lighthouse. They anchor the model’s output to a specific structure. If your examples use a “Problem-Solution-Impact” framework, the AI will tend to follow that same path. This reduces the need for long, wordy instructions and lets the examples do the heavy lifting.

How to Structure Your Few-Shot Prompt

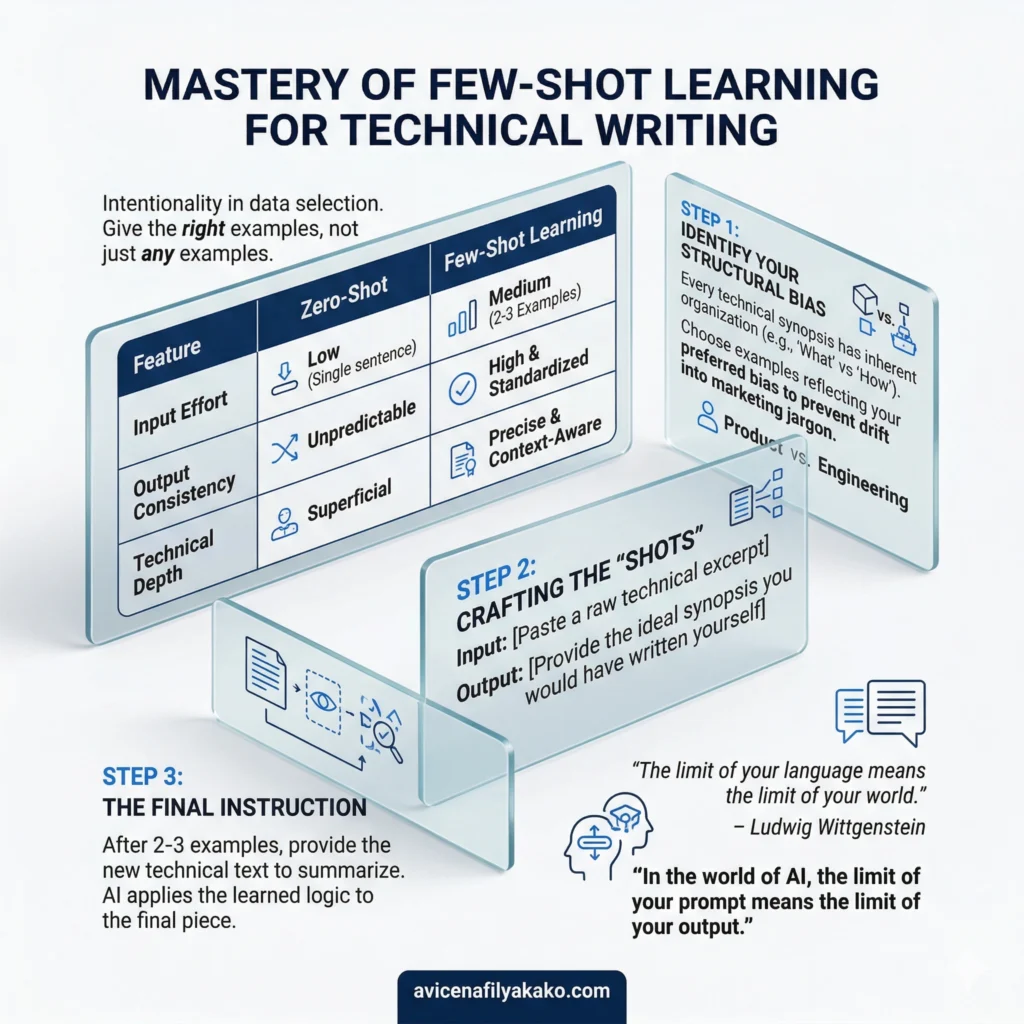

To master Few-Shot Learning for technical writing, you need to be intentional about the data you feed the prompt. It isn’t just about giving examples; it’s about giving the right examples.

| Feature | Zero-Shot | Few-Shot Learning |
|---|---|---|
| Input Effort | Low (Single sentence) | Medium (2-3 Examples) |
| Output Consistency | Unpredictable | High & Standardized |
| Technical Depth | Superficial | Precise & Context-Aware |

1. Identify Your Structural Bias

Every technical synopsis has a structural bias. This is the inherent way information is organized. Are you focusing on the “What” (the product) or the “How” (the engineering)? By selecting examples that reflect your preferred bias, you ensure the AI doesn’t drift into irrelevant marketing jargon.

2. Crafting the “Shots”

When building your prompt, follow this template for each example:

- Input: [Paste a raw technical excerpt]

- Output: [Provide the ideal synopsis you would have written yourself]

3. The Final Instruction

After your 2-3 examples, provide the new technical text you want summarized. Because of the model’s pattern matching capabilities, it will look at the previous pairs and apply the same logic to the final piece.
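The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `Shot` class, the `build_few_shot_prompt` function, and the bracketed placeholder texts are all hypothetical names introduced here. The one design choice worth noting is that the prompt ends on an open `Output:` label, so the model completes the pattern set by the earlier pairs.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """One worked example: a raw excerpt plus the synopsis you would have written."""
    source: str
    synopsis: str


def build_few_shot_prompt(shots: list[Shot], new_text: str, instruction: str = "") -> str:
    """Join Input/Output pairs, then append the new text with an open Output slot."""
    parts = []
    if instruction:
        parts.append(instruction.strip())
    for shot in shots:
        parts.append(f"Input: {shot.source.strip()}\nOutput: {shot.synopsis.strip()}")
    # The last pair is deliberately left unfinished for the model to complete.
    parts.append(f"Input: {new_text.strip()}\nOutput:")
    return "\n\n".join(parts)


# Placeholder texts: swap in your own excerpts and hand-written synopses.
shots = [
    Shot("[raw technical excerpt 1]", "[ideal synopsis 1]"),
    Shot("[raw technical excerpt 2]", "[ideal synopsis 2]"),
]
prompt = build_few_shot_prompt(
    shots,
    new_text="[new technical text to summarize]",
    instruction="Summarize each input as a technical synopsis.",
)
print(prompt)
```

The resulting string can be sent to whichever model you use; the sketch deliberately stops before any API call, since those differ by provider.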

“The limits of my language mean the limits of my world.” (Ludwig Wittgenstein)

In the world of AI, the limit of your prompt means the limit of your output.

Overcoming Common Hurdles in Technical Summarization

While Few-Shot Learning is powerful, it isn’t magic. You still need to account for how AI interprets data. One major factor is how the model handles complex variables or specialized terminology.

Managing Pattern Matching

LLMs are excellent at mimicking the look of your examples. However, if your examples are all about cloud computing and you suddenly ask for a synopsis of a biotech paper, the model might try to apply cloud computing terminology to biology. Always ensure your in-context examples are topically relevant to the task at hand.
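One way to keep examples topically relevant is to pick them from a larger library based on how closely they resemble the new input. The sketch below uses plain word overlap (Jaccard similarity) as a crude stand-in for topical similarity. The function names, the `library` structure, and the sample entries are assumptions made for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a rough proxy for what a text is about."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared words divided by all distinct words (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def pick_relevant_examples(library: list[dict], new_text: str, k: int = 3) -> list[dict]:
    """Return the k library entries whose 'input' overlaps most with new_text."""
    target = tokenize(new_text)
    ranked = sorted(
        library,
        key=lambda ex: jaccard(tokenize(ex["input"]), target),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical library entries; the "..." outputs stand in for your own synopses.
library = [
    {"input": "Autoscaling policy for a Kubernetes cluster under bursty load", "output": "..."},
    {"input": "CRISPR guide RNA design and off-target binding rates", "output": "..."},
]
best = pick_relevant_examples(library, "Off-target effects in CRISPR gene editing", k=1)
print(best[0]["input"])
```

Word overlap misses synonyms and paraphrases, so treat it as a first filter and review the selected examples by hand.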

Practical Tips for Better Synopses:

- Keep it diverse: Use examples of varying lengths so the AI understands it should adapt to the input size.

- Highlight “Negative” constraints: Sometimes, stating what the synopsis should leave out (such as marketing jargon) is just as helpful as showing what to include.

- Focus on Entities: Ensure your examples highlight key semantic keywords like “scalability,” “throughput,” or “cryptographic signatures” to signal their importance.
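The last tip can be checked before you reuse an example. The sketch below flags key terms that appear in an example’s source text but were dropped from its synopsis. The `missing_terms` helper and the sample sentences are hypothetical, and simple substring matching is an assumption that will miss plurals and synonyms.

```python
def missing_terms(source: str, synopsis: str, key_terms: list[str]) -> list[str]:
    """Key terms present in the source but absent from the synopsis."""
    src, syn = source.lower(), synopsis.lower()
    return [t for t in key_terms if t.lower() in src and t.lower() not in syn]


# Illustrative example pair, not taken from a real document.
source = "The new queue improves throughput and scalability by batching writes."
synopsis = "Batching writes raises throughput."
key_terms = ["scalability", "throughput", "cryptographic signatures"]

dropped = missing_terms(source, synopsis, key_terms)
print(dropped)  # ['scalability']
```

Terms that never appear in the source, such as “cryptographic signatures” here, are not flagged, so the check only reports importance signals the synopsis actually lost.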

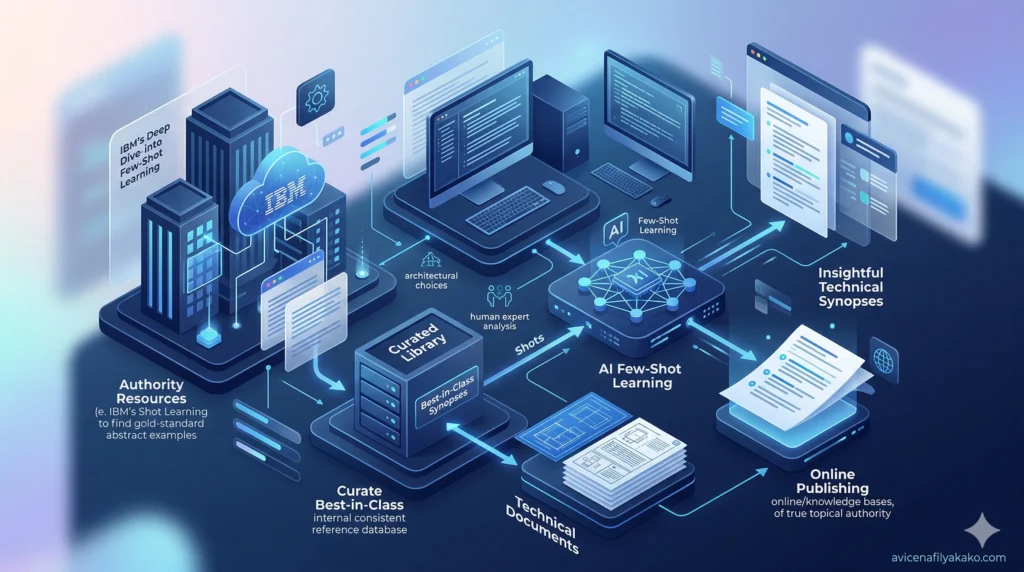

Maximizing Topical Authority with AI

To build true topical authority, your technical synopses must be more than just short; they must be insightful. By using Few-Shot Learning, you can steer the AI toward the “unspoken” value in a technical document: the subtle architectural choices that a human expert would notice.

For instance, you might consult high-authority resources, such as IBM’s deep dive into how few-shot learning works, and look for gold-standard abstracts that can serve as your “shots.” Internally, maintain a library of “Best-in-Class” synopses as a consistent reference for your prompts.

Check out our guide on “Advanced Prompt Engineering for Developers” for more tips.

FAQ: Few-Shot Prompting Explained

What is the main benefit of Few-Shot Learning for SEO writers?

Few-Shot Learning lets writers enforce a specific brand voice and level of technical depth that Zero-Shot prompting rarely achieves, leading to higher-quality content that satisfies user intent. It also reduces the “robotic” feel of AI-generated summaries.

How many examples are needed for an effective Few-Shot prompt?

Typically, 2 to 5 examples are sufficient to establish a clear pattern for the AI to follow. Providing more than five often leads to diminishing returns and can consume too much of the model’s “context window.”
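To keep examples from crowding the context window, you can cap them by an approximate token budget. The sketch below assumes roughly four characters per token, a common rule of thumb for English text and not a real tokenizer. Both function names are hypothetical, and for accurate counts you would use the tokenizer that belongs to your model.

```python
def rough_token_count(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def fit_examples(examples: list[str], budget_tokens: int, max_examples: int = 5) -> list[str]:
    """Keep examples in order until the token budget or the example cap is hit."""
    kept, used = [], 0
    for ex in examples[:max_examples]:
        cost = rough_token_count(ex)
        if used + cost > budget_tokens:
            break
        kept.append(ex)
        used += cost
    return kept


# Three formatted examples of about 10 estimated tokens each; a budget of 25 fits two.
examples = ["a" * 40, "b" * 40, "c" * 40]
print(len(fit_examples(examples, budget_tokens=25)))  # 2
```

Put your strongest examples first, since this approach drops whatever falls past the budget.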

Does Few-Shot Learning work for all AI models?

Yes, most modern LLMs (like Gemini, GPT-4, and Claude) handle Few-Shot prompts well, because learning from in-context examples is a core capability of large pretrained models. In general, the more capable the model, the better it handles the nuances of your examples.


This article was authored by Avicena Fily A Kako, a Digital Entrepreneur & SEO Specialist using AI to scale business and finance projects.