If you have ever caught an AI confidently asserting a “fact” that was completely made up, you have witnessed a hallucination. It’s the equivalent of a friend telling a story with such conviction that you don’t realize they’re dreaming until you check the details. As we rely more on Large Language Models (LLMs), the need for Chain of Verification (CoVe) has become paramount.

I look at Chain of Verification as the “double-check” phase of digital thinking. Instead of letting an AI provide a one-and-done answer, we implement a multi-step process that forces the model to audit its own logic. This approach is essential for anyone using AI for research, technical writing, or data analysis where accuracy is non-negotiable.

Why Hallucinations Happen: The Logic Loop Problem

LLMs are essentially sophisticated word predictors. They don’t “know” facts; they calculate the probability of the next token. Sometimes the most probable continuation is fluent but false, and once an error is on the page the model tends to build on it rather than correct it. That self-reinforcing pattern is the logic loop.

“The problem with AI is not that it is ‘too smart,’ but that it is often too confident in its ignorance.” — Adapted from various industry observations on LLM reliability.

By using Chain of Verification, you break the cycle of blind prediction. You are essentially asking the AI to slow down, look at its work, and find its own mistakes before it presents the final result to you.

The 4-Step Architecture of Chain of Verification

Implementing Chain of Verification isn’t about a single prompt; it’s a workflow. Here is how I break down the architecture to ensure maximum hallucination reduction:

- Draft Initial Response: The AI generates a baseline answer to your query.

- Plan Verification Questions: The AI identifies the factual claims in its draft and generates questions to verify them.

- Execute Independent Verification: Each question is answered separately, often in a fresh context that does not include the draft, so the model cannot simply repeat its original errors (“knowledge contamination”).

- Final Verified Output: The AI compares the initial draft against the verified facts and produces a corrected response.
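The four steps above can be sketched in Python. This is a minimal illustration, not a reference implementation: `llm` is a hypothetical callable that takes a prompt string and returns the model’s reply as text (connect it to whichever API you use), and the function name `chain_of_verification` and all prompt wording are assumptions of this sketch.

```python
from typing import Callable, List, Tuple


def chain_of_verification(query: str, llm: Callable[[str], str]) -> str:
    """Draft, plan, verify, and revise around a hypothetical `llm` callable."""
    # Step 1: draft an initial response.
    draft = llm(f"Answer the following question.\n\nQuestion: {query}")

    # Step 2: ask for verification questions, one per line.
    plan = llm(
        "List one verification question per line for each factual claim "
        f"in the text below.\n\nText: {draft}"
    )
    questions: List[str] = [
        line.strip() for line in plan.splitlines() if line.strip()
    ]

    # Step 3: answer each question in its own call, without showing the draft,
    # so the model cannot simply repeat the draft's mistakes.
    checks: List[Tuple[str, str]] = [
        (q, llm(f"Answer concisely and factually: {q}")) for q in questions
    ]

    # Step 4: revise the draft against the verification answers.
    evidence = "\n".join(f"Q: {q}\nA: {a}" for q, a in checks)
    return llm(
        "Rewrite the draft so it agrees with the verification answers. "
        "Correct errors and remove claims that were not verified.\n\n"
        f"Question: {query}\n\nDraft: {draft}\n\nVerification:\n{evidence}"
    )
```

The design choice that matters most here is in Step 3: the draft is deliberately left out of each verification prompt, which is the code-level version of answering in a fresh context.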

Comparison: Standard Prompting vs. Chain of Verification

| Feature | Standard Prompting | Chain of Verification |
|---|---|---|
| Accuracy | Variable (prone to hallucinations) | Higher (self-corrected) |
| Process | Single-shot generation | Multi-stage auditing |
| Logic Type | Linear prediction | Verification loop and self-correction |

How to Implement Chain of Verification in Your Workflow

You don’t need to be a developer to use Chain of Verification. You can implement it manually through a structured “meta-prompting” technique. Here is a practical guide on how you can apply this today.

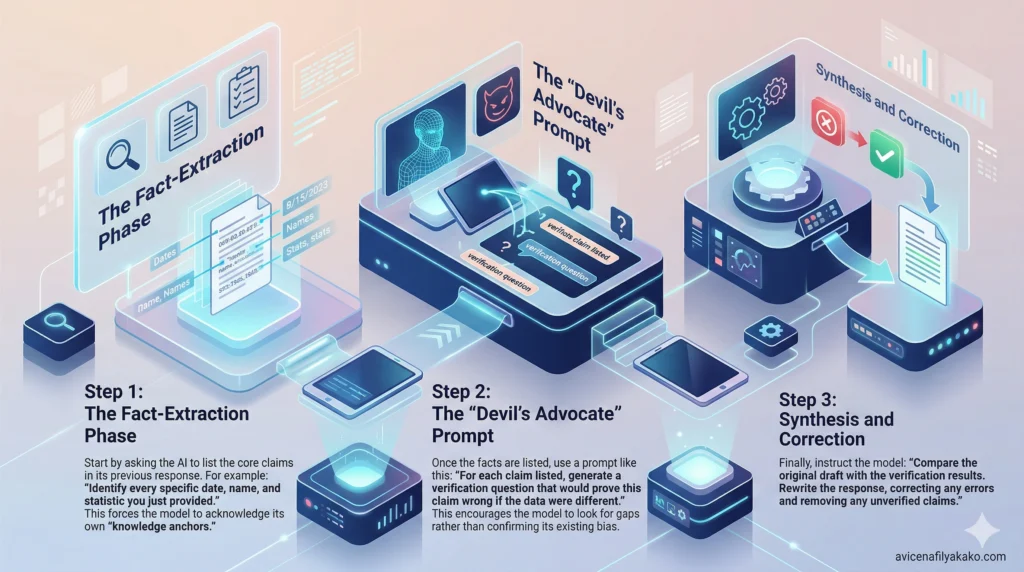

Step 1: The Fact-Extraction Phase

Start by asking the AI to list the core claims in its previous response. For example: “Identify every specific date, name, and statistic you just provided.” This forces the model to acknowledge its own “knowledge anchors.”

Step 2: The “Devil’s Advocate” Prompt

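Once the facts are listed, use a prompt like this: “For each claim listed, generate a verification question that would prove this claim wrong if the data were different.” This encourages the model to look for gaps rather than confirm its existing bias. Then have the model answer each question on its own, ideally in a new chat that does not contain the original draft, so that you have verification results to work with in the next step.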

Step 3: Synthesis and Correction

Finally, instruct the model: “Compare the original draft with the verification results. Rewrite the response, correcting any errors and removing any unverified claims.”
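If you run this workflow often, the three prompts can be kept as small reusable templates. The sketch below is one possible way to do that, assuming you paste the claims and verification answers in by hand; the function names and the layout of the assembled prompts are hypothetical, while the instruction sentences are the ones quoted in the steps above.

```python
from typing import Dict, List


def fact_extraction_prompt() -> str:
    # Step 1: sent as a follow-up in the same chat as the original answer.
    return "Identify every specific date, name, and statistic you just provided."


def devils_advocate_prompt(claims: List[str]) -> str:
    # Step 2: number the claims so each question can be traced back to one claim.
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(claims, start=1))
    return (
        "For each claim listed, generate a verification question that would "
        "prove this claim wrong if the data were different.\n\n" + numbered
    )


def synthesis_prompt(draft: str, results: Dict[str, str]) -> str:
    # Step 3: pair every verification question with the answer it received.
    findings = "\n".join(f"- {q} -> {a}" for q, a in results.items())
    return (
        "Compare the original draft with the verification results. Rewrite the "
        "response, correcting any errors and removing any unverified claims.\n\n"
        f"Original draft:\n{draft}\n\nVerification results:\n{findings}"
    )


if __name__ == "__main__":
    # Placeholder claims, only to show the shape of the assembled prompt.
    print(devils_advocate_prompt(["Claim A", "Claim B"]))
```

Numbering the claims is a small convenience: it lets you match each verification question back to the claim it is meant to test when you do the final comparison.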

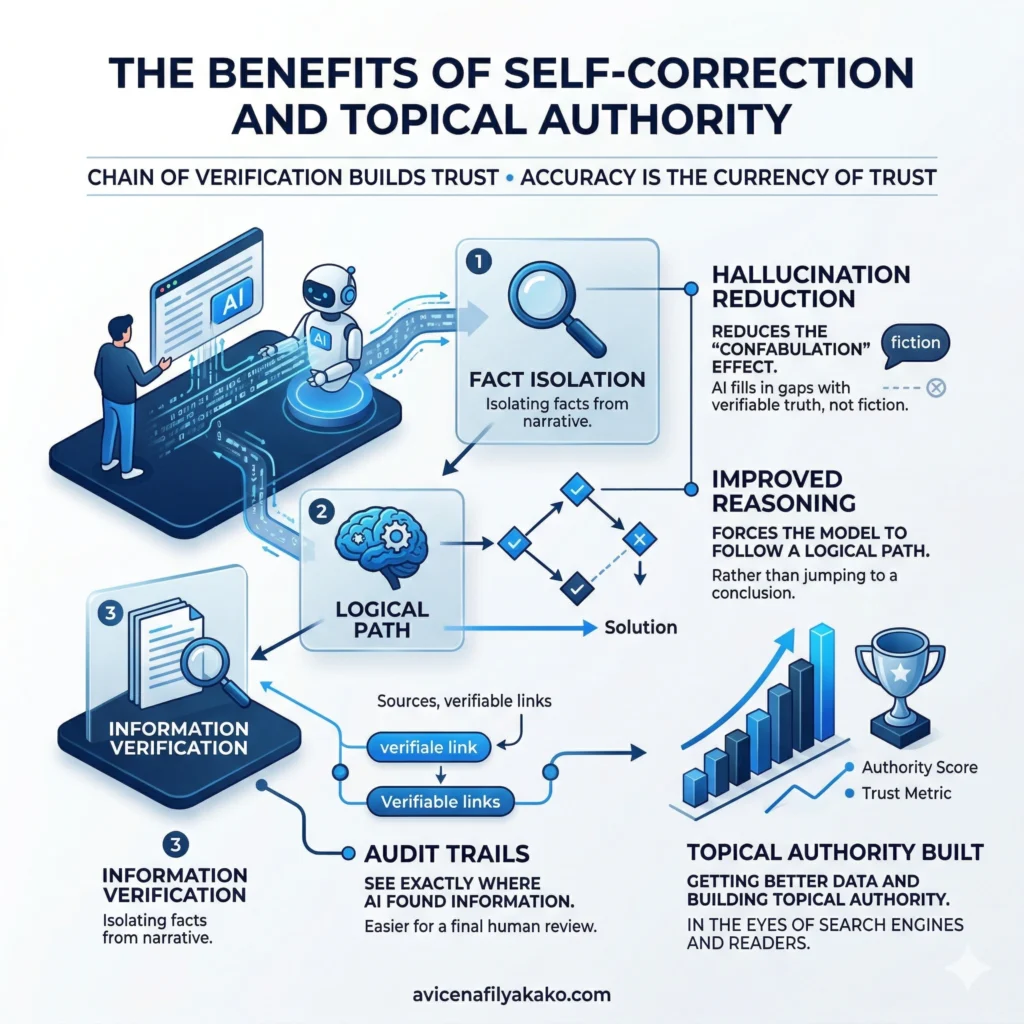

The Benefits of Self-Correction and Topical Authority

When you use Chain of Verification, you aren’t just getting better data; you are building topical authority. In the eyes of search engines and readers, accuracy is the currency of trust.

- Hallucination Reduction: By isolating facts from the narrative, you reduce the “confabulation” effect where the AI fills in gaps with fiction.

- Improved Reasoning: The process forces the model to follow a logical path rather than jumping to a conclusion.

- Audit Trails: You can see which claims were checked and how each verification question was answered, making it easier for you to perform a final human review.

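Research paper: “Chain-of-Verification Reduces Hallucination in Large Language Models” (Dhuliawala et al., 2023), available on arXiv, the preprint server operated by Cornell University.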

FAQs on Chain of Verification

Does Chain of Verification eliminate all AI errors?

No. Chain of Verification significantly reduces hallucinations, but it cannot guarantee 100% accuracy. The model checks its claims against its own knowledge, so errors rooted in flawed training data can survive verification. It is a tool for self-correction and logic-checking, and human oversight remains the final line of defense for critical information.

Is CoVe the same as Chain of Thought (CoT) prompting?

No. CoVe shares the step-by-step spirit of Chain of Thought, but it is a separate technique that focuses on fact-checking rather than on reasoning steps. While CoT helps a model “think” through a problem, Chain of Verification forces the model to “fact-check” its answer after it has been drafted.

Can I use CoVe for creative writing?

Yes, you can use Chain of Verification in creative contexts to maintain internal consistency in world-building or character history. It helps prevent “plot holes” by verifying that a new scene doesn’t contradict facts established earlier in your story or script.

Summary of Best Practices

To get the most out of Chain of Verification, keep these tips in mind:

- Be Granular: The more specific the verification questions, the better the result.

- Isolate Tasks: If possible, perform the verification in a new chat window to avoid the model trying to “agree” with its previous mistakes.

- Human-in-the-Loop: Use CoVe to do the heavy lifting, but always verify the final statistics yourself.
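The Human-in-the-Loop tip can be supported with a small helper that pulls out every sentence containing a number, so you know which lines to check by hand. This is a rough sketch under simple assumptions: the function name `sentences_needing_review` is hypothetical, the sentence splitting is naive (abbreviations such as “e.g.” will confuse it), and the sample text is invented for illustration.

```python
import re
from typing import List


def sentences_needing_review(text: str) -> List[str]:
    """Return sentences that contain a digit, as candidates for a manual check."""
    # Naive split: break after ., !, or ? only when whitespace follows,
    # so decimals such as 1.2 stay inside their sentence.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if re.search(r"\d", s)]


if __name__ == "__main__":
    # Invented sample text, not real data.
    sample = "The bridge opened in 1932. It is painted red. It spans 1.2 km!"
    for sentence in sentences_needing_review(sample):
        print("CHECK:", sentence)
```

A filter like this only narrows down what to read; it does not verify anything on its own.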

By integrating these habits, you transform AI from a risky collaborator into a precise research powerhouse.

Disclaimer: The information provided in this article is for educational and general informational purposes only and should not be construed as professional advice (such as legal, medical, or financial). While the author strives to provide accurate and up-to-date information, no representations or warranties are made regarding its completeness or reliability. Any action you take based on this information is strictly at your own risk.

This article was authored by Avicena Fily A Kako, a Digital Entrepreneur & SEO Specialist using AI to scale business and finance projects.